About me

I am a third-year Ph.D. student in the Robotics Perception and Learning Lab (RIPL) at Georgia Tech, advised by Prof. Zsolt Kira. My research aims to improve the generalizability of foundation models, especially Vision–Language Models (VLMs). I am particularly interested in robust fine-tuning, reasoning, VLM-as-Judge, and Vision–Language–Action Models (VLAs).

During Summer 2026, I am a Research Scientist Intern at Adobe Research, working with Dr. Kushal Kafle on VLM-as-Judge.

During Spring and Summer 2023, I was a Research Scientist Intern at Microsoft Research Asia, working on AI4Science.

I graduated with a B.S. in Statistics from Renmin University of China, where I was fortunate to work with Prof. Hongteng Xu in the Structured Data Science Lab (SDSL).

You can find more details in my CV here.

News

- [2026.05] I will be joining Adobe Research as a Research Scientist Intern, working with Dr. Kushal Kafle on VLM-as-Judge! See you in the Bay Area!

- [2026.05] SafeManip is online! It is the first property-driven benchmark for temporal safety evaluation in robotic manipulation.

- [2026.03] Selected as a Qualcomm Innovation Fellowship Finalist with my amazing teammate Mellon Zhang!

- [2026.02] MAPS was accepted to CVPR 2026! See you in Denver!

- [2025.06] Mimicking or Reasoning was featured on YouTube by Discover AI!

- [2025.04] I received the CVPR 2025 Travel Support Award. Thank you! See you in Nashville!

- [2025.04] I passed my Qualifying Exam!

- [2025.02] FRAMES-VQA was accepted to CVPR 2025!

- [2025.01] DiGraP was accepted to ICLR 2025!

- [2023.08] I joined the Machine Learning Ph.D. program at the Georgia Institute of Technology!

Selected Publications

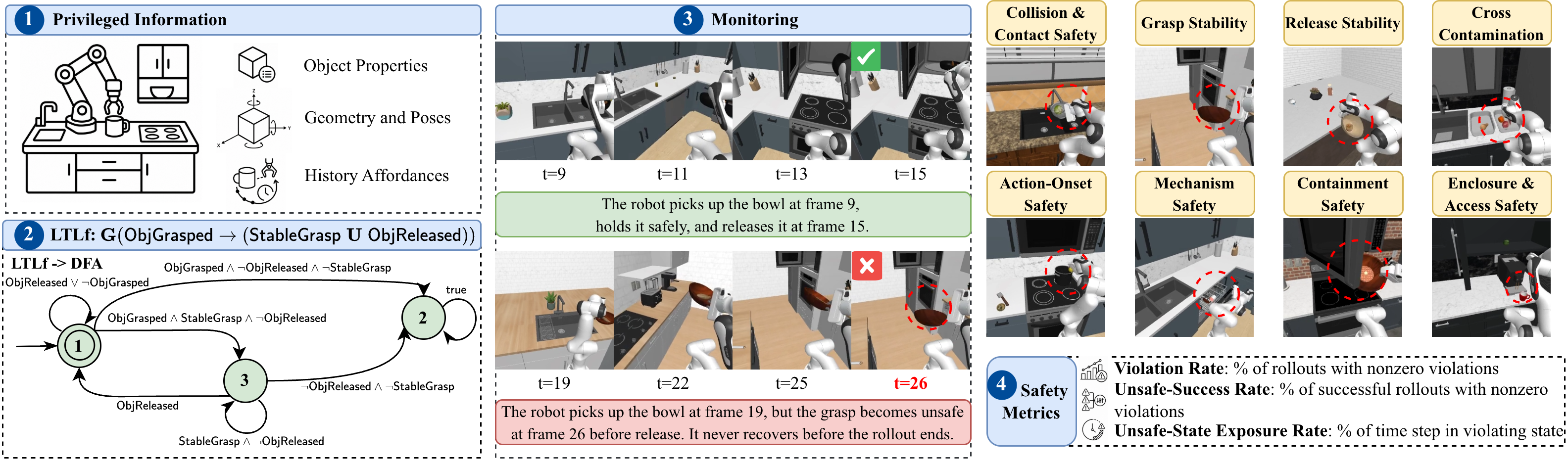

SafeManip: A Property-Driven Benchmark for Temporal Safety Evaluation in Robotic Manipulation

arXiv 2026

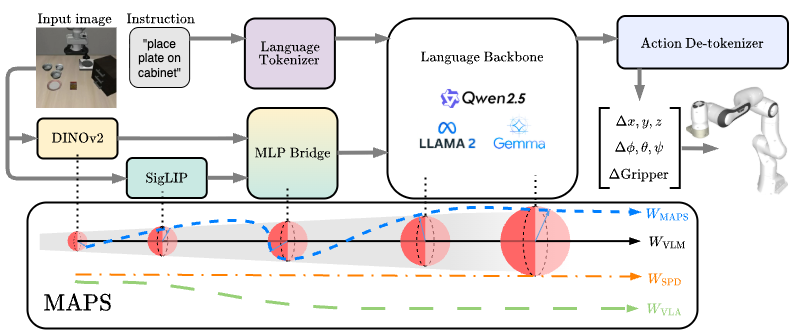

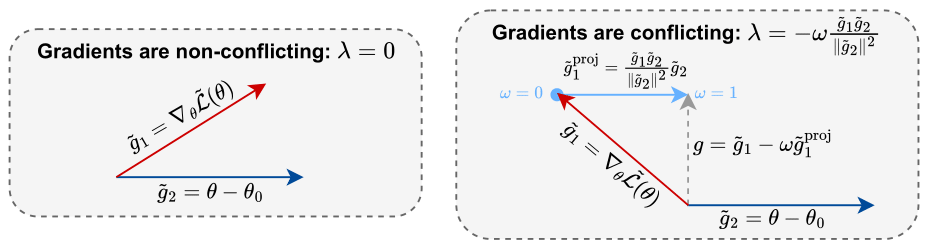

MAPS: Preserving Vision-Language Representations via Module-Wise Proximity Scheduling for Better Vision-Language-Action Generalization

CVPR 2026

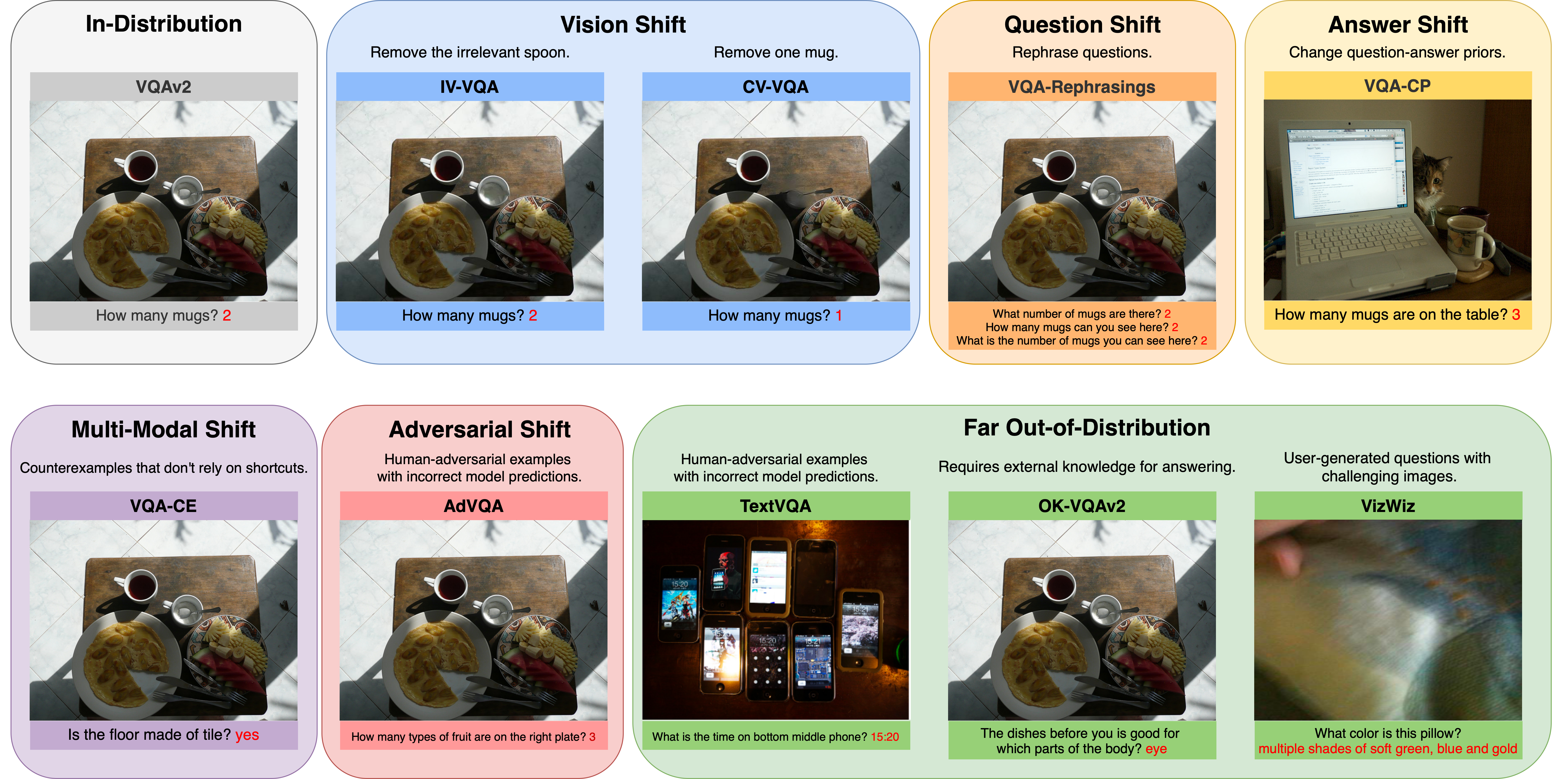

FRAMES-VQA: Benchmarking Fine-Tuning Robustness across Multi-Modal Shifts in Visual Question Answering

CVPR 2025